Streamlining Biological Sample Processing for High-Throughput Research

When conducting large-scale biomedical studies, the way samples are processed can determine whether results are reliable, reproducible, and achieved on schedule. From blood fractionation to nucleic acid extraction, sample processing represents a critical stage where precision, speed, and quality assurance converge. Yet many research organisations face bottlenecks in this phase due to limited infrastructure, inconsistent methodologies, or insufficient scalability.

Scaling from tens to thousands of samples per day requires automation and flexible operations supported by robust quality management frameworks. The difference between manual bench work and industrialised sample processing often determines whether ambitious research timelines are met or missed entirely.

The Foundation of Quality Sample Processing

Automation and Standardisation

Manual sample processing introduces variability that can compromise entire studies. Human error, fatigue, and inconsistent technique create batch effects that confound results and reduce statistical power. High-speed centrifuges and bespoke liquid handling robots enable precise identification and separation of blood components including plasma, serum, buffy coat, and plasma-depleted red blood cells, ensuring every sample receives identical treatment.

Automated liquid handling systems greatly reduce pipetting errors whilst dramatically increasing throughput. Pooling, aliquoting, and reformatting operations that would take weeks of manual labour can be completed in days. For studies involving thousands of participants, this automation can determine whether the study is feasible at all.

Comprehensive Tracking and Traceability

Sample processing generates complex data that must be captured, stored, and made accessible throughout the research lifecycle. Laboratory Information Management Systems (LIMS) track all processing activities from the moment samples arrive, receipting them and recording their journey through every processing step and movement. This digital chain of custody proves essential for regulatory submissions and publication requirements.

Beyond basic tracking, sophisticated LIMS platforms retain the data associated with samples even after they have been consumed, allowing cohorts to be tracked comprehensively throughout their lifetime. This persistent data architecture supports longitudinal studies where samples may be processed in multiple phases across years.
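To make the idea of a chain of custody concrete, here is a minimal sketch of how a sample record might accumulate an append-only event history and keep that history after the sample is consumed. The class names (`CustodyEvent`, `SampleRecord`), the action labels, and the location and operator IDs are all hypothetical illustrations, not the schema of any real LIMS product.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class CustodyEvent:
    """One entry in a sample's audit trail (hypothetical schema)."""
    timestamp: datetime
    action: str    # e.g. "received", "centrifuged", "aliquoted", "consumed"
    location: str  # workstation, freezer, or rack position (illustrative IDs)
    operator: str


@dataclass
class SampleRecord:
    sample_id: str
    events: list[CustodyEvent] = field(default_factory=list)
    consumed: bool = False

    def log(self, action: str, location: str, operator: str) -> None:
        # Events are only ever appended, never edited or deleted.
        if self.consumed:
            raise ValueError(f"{self.sample_id} has been consumed; no further steps allowed")
        self.events.append(
            CustodyEvent(datetime.now(timezone.utc), action, location, operator)
        )
        if action == "consumed":
            # The record and its history are kept; only the status changes.
            self.consumed = True

    def current_location(self) -> str | None:
        return self.events[-1].location if self.events else None


record = SampleRecord("S-0001")
record.log("received", "goods-in", "op1")
record.log("centrifuged", "centrifuge-2", "op1")
record.log("aliquoted", "liquid-handler-1", "op2")
record.log("consumed", "extraction-bench", "op2")
print(record.consumed, len(record.events))  # True 4
```

The design choice worth noting is that consumption is a status change on an existing record rather than a deletion, which is what allows a cohort's full history to remain queryable after the physical material is gone.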

Key Processing Capabilities for Modern Research

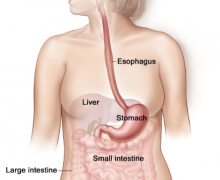

Blood Component Separation

Fractionation remains one of the most common processing requirements in biomedical research. Efficiently separating whole blood into its constituent parts lets researchers preserve each component under optimal conditions and conduct targeted analyses. When evaluating a sample processing service, verify that its fractionation protocols match your study design and that it can accommodate the tube types and anticoagulants your study uses.

Nucleic Acid Extraction

DNA and RNA extraction underpins genomics, transcriptomics, and pharmacogenomics research. Automated systems for extraction, quantification, and normalisation of DNA and RNA deliver highly consistent, repeatable results essential for downstream applications like sequencing and genotyping. Manual extraction methods simply cannot achieve the throughput and reproducibility required for population-scale studies.

Quality control at this stage determines whether expensive downstream analyses will succeed or fail. Concentration normalisation ensures uniform input into sequencing libraries or genotyping assays, preventing costly repeat experiments and data gaps.
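Concentration normalisation is, at its core, the dilution relationship C1 × V1 = C2 × V2: given a measured stock concentration C1, a target concentration C2, and a final volume V2, the volume of sample to take is V1 = C2 × V2 / C1, topped up with diluent. The sketch below assumes a single dilution step into buffer and ignores minimum pipettable volumes; the function name `normalisation_volumes` and the well readings are hypothetical, illustrative values rather than real data.

```python
from __future__ import annotations


def normalisation_volumes(
    stock_conc: float, target_conc: float, final_volume: float
) -> tuple[float, float]:
    """Return (sample_volume, diluent_volume) from C1 * V1 = C2 * V2.

    Concentrations in ng/uL, volumes in uL. Assumes a simple one-step
    dilution into buffer; minimum pipettable volumes are not modelled.
    """
    if stock_conc <= 0 or target_conc <= 0 or final_volume <= 0:
        raise ValueError("concentrations and volume must be positive")
    if stock_conc < target_conc:
        # Dilution cannot raise concentration, so flag the sample for review.
        raise ValueError(
            f"stock {stock_conc} ng/uL is below target {target_conc} ng/uL"
        )
    sample_volume = target_conc * final_volume / stock_conc  # V1 = C2 * V2 / C1
    return sample_volume, final_volume - sample_volume


# Illustrative quantification readings, not real data.
measured = {"A01": 50.0, "A02": 25.0, "A03": 8.0}
for well, conc in measured.items():
    try:
        v_sample, v_diluent = normalisation_volumes(conc, 10.0, 50.0)
        print(f"{well}: {v_sample:.1f} uL sample + {v_diluent:.1f} uL diluent")
    except ValueError as err:
        print(f"{well}: flagged for QC ({err})")
```

With a 10 ng/uL target in 50 uL, well A01 takes 10 uL of sample plus 40 uL of diluent, A02 takes 20 uL plus 30 uL, and A03 is flagged because it is already below target, which is exactly the kind of sample that would otherwise produce a failed or uneven downstream run.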

Aliquoting and Sample Archiving

Dividing a parent sample into smaller aliquots allows separate portions to be stored for DNA and RNA extraction, testing, and biobanking for future research. This foresight prevents irreplaceable samples from being exhausted by initial analyses, preserving material for emerging technologies and unanticipated research questions.

Proper aliquoting strategy balances current analytical needs against future flexibility. Experienced processing partners help researchers design tube layouts and volume distributions that maximise long-term value.
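One simple way to reason about this balance is to work out how many equal aliquots a parent tube can yield, allocate those needed for current analyses, and reserve the remainder for the archive. The sketch below is a hypothetical planning helper (`plan_aliquots` is not a real tool), and the 4 mL parent volume, 500 uL aliquot size, and 200 uL unrecoverable dead volume are illustrative assumptions.

```python
from __future__ import annotations


def plan_aliquots(
    parent_volume_ul: float,
    aliquot_volume_ul: float,
    immediate_uses: dict[str, int],
    dead_volume_ul: float = 0.0,
) -> dict[str, int]:
    """Split a parent sample into equal-volume aliquots.

    immediate_uses maps a purpose (e.g. "DNA extraction") to the number of
    aliquots needed now; every remaining aliquot is reserved for "biobank".
    dead_volume_ul is liquid assumed unrecoverable from the parent tube.
    """
    if aliquot_volume_ul <= 0:
        raise ValueError("aliquot volume must be positive")
    usable = max(parent_volume_ul - dead_volume_ul, 0.0)
    total = int(usable // aliquot_volume_ul)  # only whole aliquots count
    needed = sum(immediate_uses.values())
    if needed > total:
        raise ValueError(f"need {needed} aliquots but only {total} can be drawn")
    plan = dict(immediate_uses)
    # Any aliquots not needed now are reserved for the biobank archive.
    plan["biobank"] = plan.get("biobank", 0) + (total - needed)
    return plan


plan = plan_aliquots(
    4000.0, 500.0,
    {"DNA extraction": 1, "RNA extraction": 1, "testing": 2},
    dead_volume_ul=200.0,
)
print(plan)
# {'DNA extraction': 1, 'RNA extraction': 1, 'testing': 2, 'biobank': 3}
```

Here 3,800 uL of usable volume yields seven 500 uL aliquots; four cover the immediate analyses and three go to the archive. Changing the aliquot size is the main lever: smaller aliquots increase the number of archived copies at the cost of less material per future assay.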

Quality Assurance and Regulatory Compliance

Sample processing for clinical trials demands particularly stringent quality systems. ISO 9001 certification and UKAS accreditation to ISO 15189 provide independent assurance that samples are processed to a high standard and meet the requirements for clinical trial support. Together, they verify that quality management systems, staff competency programmes, and equipment validation protocols meet international benchmarks.

Regular internal audits, proficiency testing, and method validation studies provide ongoing assurance that processing quality remains consistent. For pharmaceutical sponsors and regulatory authorities, these quality frameworks offer confidence that sample data will withstand scrutiny during submissions and inspections.

Frequently Asked Questions

What throughput capacity should a processing facility offer?

Throughput requirements vary dramatically by study design. Large cohort studies may require processing thousands of samples daily, whilst smaller trials need flexible capacity. Leading facilities offer scalable operations that accommodate both high-volume steady-state processing and surge capacity for intensive collection periods.

How important is Laboratory Information Management System integration?

Essential. LIMS integration ensures sample tracking, data capture, and quality control documentation occur automatically rather than relying on manual record-keeping. This reduces errors, accelerates turnaround times, and creates audit trails required for regulatory compliance. Modern LIMS platforms also enable real-time visibility into processing status.

Can processing services accommodate custom protocols?

Yes. Whilst standardised protocols suit many applications, bespoke studies often require customised processing workflows. Experienced providers work collaboratively to develop, validate, and implement tailored protocols that align with specific research objectives whilst maintaining quality standards and regulatory compliance.

What turnaround times are achievable for large-scale processing?

For routine processing like blood fractionation or DNA extraction, samples collected in the morning can typically be processed and stored the same day. More complex protocols requiring multiple steps may extend to 48-72 hours. Clear communication about collection schedules and processing priorities helps facilities optimise workflows to meet project timelines.

How do processing facilities ensure sample integrity during workflows?

Multiple safeguards protect samples, including temperature-controlled environments, cold chain management during transfers between workstations, validated and regularly maintained equipment, trained personnel following standard operating procedures, and continuous environmental monitoring. Quality management systems provide oversight to ensure these controls function reliably.

Conclusion

Sample processing represents the crucial bridge between collection and analysis, where careful methodology preserves biological integrity and enables reliable results. As research studies grow in scale and complexity, the infrastructure supporting sample processing must evolve accordingly. Automated systems, robust tracking platforms, and quality-assured workflows provide the foundation for successful biomedical research.

Partnering with experienced processing facilities early in study planning ensures protocols align with analytical requirements, regulatory expectations, and practical logistics. This proactive approach prevents costly modifications later and positions research programmes for success from sample collection through final analysis.